db-agent

An open-source text-to-SQL AI agent for the modern lakehouse. Ask questions in plain English, get back validated SQL that runs against Databricks Lakebase, Unity Catalog, Postgres, MySQL, or Snowflake.

Presented at AAAI-25. Ships a Databricks-Apps deployment variant, a Streamlit UI, and a pluggable LLM endpoint that can target any OpenAI-compatible inference server.

The live demo runs against a sample Olist e-commerce dataset on Streamlit Community Cloud — ask in plain English, see the generated SQL and the rows it returned.

Multi-data-plane

Query Databricks Lakebase (Postgres OLTP), Unity Catalog Delta (OLAP), Snowflake, and MySQL through one schema-aware agent. Lakehouse Federation joins are handled at the warehouse, not in the agent.

SELECT-only safety guardrail

Every generated SQL statement is validated before execution. The validator blocks DROP, DELETE, UPDATE, INSERT, and ALTER, plus the Databricks-specific OPTIMIZE, VACUUM, and ZORDER. Word-boundary regex matching avoids false positives, so a column named updated_at does not trip the UPDATE check.
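The guardrail can be pictured with a minimal sketch like the one below. This is an illustration under stated assumptions, not the project's actual validator: the function name is_safe_select is hypothetical, the keyword list is taken from the text above, and the extra rules (single statement, must start with SELECT or WITH) are assumptions added for the example.

```python
import re

# Keywords named in the text above; the real project's list may differ.
BLOCKED_KEYWORDS = (
    "DROP", "DELETE", "UPDATE", "INSERT", "ALTER",
    "OPTIMIZE", "VACUUM", "ZORDER",
)

# \b anchors each keyword at word boundaries, so "updated_at" does not match "UPDATE".
_BLOCKED_RE = re.compile(r"\b(" + "|".join(BLOCKED_KEYWORDS) + r")\b", re.IGNORECASE)


def is_safe_select(sql: str) -> bool:
    """Return True only for one SELECT (or WITH ... SELECT) statement with no blocked keyword."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:
        return False  # more than one statement (assumed rule for this sketch)
    if not re.match(r"(?i)^(SELECT|WITH)\b", statement):
        return False
    return _BLOCKED_RE.search(statement) is None
```

A check this simple is deliberately conservative: it would also reject a SELECT whose string literal contains a blocked word or a semicolon, which trades a few false rejections for never letting a write statement through.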

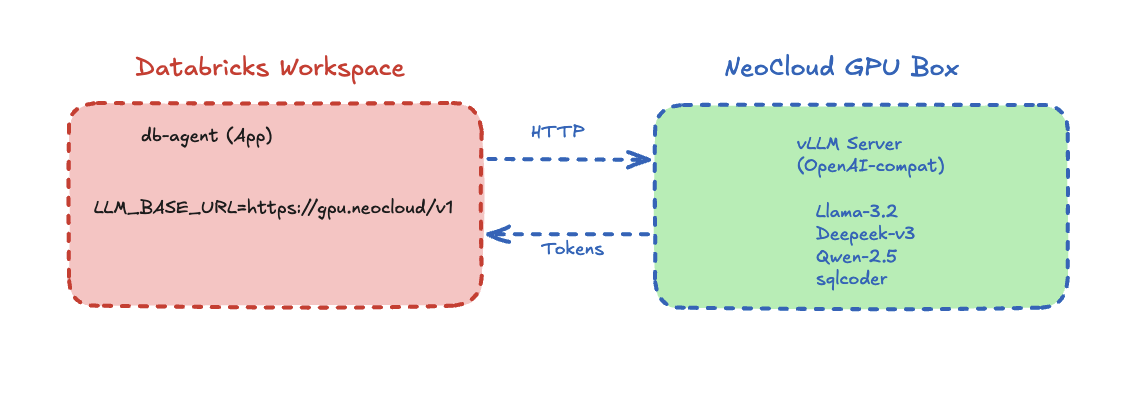

Pluggable LLM endpoint

One env var (LLM_BASE_URL) points the agent at Databricks Model Serving, OpenAI, Azure, GitHub Models, or a self-hosted vLLM server on a neo-cloud GPU. Swap inference providers without touching application code.
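As a rough sketch of how an environment-driven endpoint might be wired, the snippet below reads LLM_BASE_URL and LLM_MODEL (both appear in the quick start) and derives the chat-completions URL. The LLMConfig class, the load_llm_config function, the LLM_API_KEY variable, and the fallback defaults are all hypothetical names chosen for illustration, and the URL layout assumes a server that follows OpenAI's path convention.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class LLMConfig:
    base_url: str
    model: str
    api_key: str

    @property
    def chat_url(self) -> str:
        # Assumes the server follows OpenAI's path layout for chat completions.
        return self.base_url.rstrip("/") + "/chat/completions"


def load_llm_config(env=os.environ) -> LLMConfig:
    """Build the config from environment variables; the defaults are illustrative."""
    return LLMConfig(
        base_url=env.get("LLM_BASE_URL", "https://api.openai.com/v1"),
        model=env.get("LLM_MODEL", "gpt-4o-mini"),
        api_key=env.get("LLM_API_KEY", ""),  # hypothetical variable name
    )
```

Because every provider-specific value lives in the environment, pointing the agent at a local vLLM server would only mean exporting a different LLM_BASE_URL before starting the app.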

How it works

A deterministic pipeline — five steps in order, no autonomous loops, no surprise LLM bills. Every question produces exactly one LLM call and one SQL statement.

Schema

Reads INFORMATION_SCHEMA across all attached data planes.
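One plausible shape for this step, shown only as a sketch: query the standard information_schema.columns view on each connection and group the rows by table. The constant COLUMNS_QUERY and the helper group_columns are hypothetical names, and the project may collect more metadata than this.

```python
from collections import defaultdict

# information_schema.columns is a standard view; these column names follow the SQL standard.
COLUMNS_QUERY = """
SELECT table_schema, table_name, column_name, data_type
FROM information_schema.columns
ORDER BY table_schema, table_name, ordinal_position
"""


def group_columns(rows):
    """Turn (schema, table, column, type) rows into {"schema.table": [(column, type), ...]}."""
    tables = defaultdict(list)
    for schema, table, column, data_type in rows:
        tables[f"{schema}.{table}"].append((column, data_type))
    return dict(tables)
```

The rows passed to group_columns would come from running COLUMNS_QUERY on each data plane's connection and concatenating the results.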

Prompt

Schema-first prompt template — types before query shape.
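A schema-first template could look something like the sketch below, where the tables and their column types come before the question and the output instructions. The wording of the template, the function names, and the JSON keys "sql" and "explanation" are assumptions made for illustration; the project's actual prompt is not shown in this page.

```python
# Hypothetical template: schema and types first, then the question, then the output shape.
PROMPT_TEMPLATE = """You are a text-to-SQL assistant. Use only the tables and columns below.

Schema:
{schema}

Question: {question}

Reply with JSON containing the keys "sql" and "explanation". The SQL must be a single SELECT statement."""


def render_schema(tables):
    """Render {"schema.table": [(column, type), ...]} as one line per table."""
    lines = []
    for table, columns in tables.items():
        cols = ", ".join(f"{name} {dtype}" for name, dtype in columns)
        lines.append(f"{table}({cols})")
    return "\n".join(lines)


def build_prompt(tables, question):
    return PROMPT_TEMPLATE.format(schema=render_schema(tables), question=question)
```

Putting the types ahead of the question gives the model the column names and data types before it starts shaping a query, which is the ordering the step description refers to.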

LLM

OpenAI-compatible POST. Returns structured JSON.
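The single LLM call might be sketched as follows, using only the standard library. The helper names build_request and parse_completion are hypothetical, the "sql" key is an assumed response shape, and the response_format field is part of OpenAI's chat-completions API that some compatible servers may not accept.

```python
import json
import urllib.request


def build_request(chat_url, model, api_key, prompt):
    """Build one OpenAI-style chat-completions POST request (not sent here)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,
        # Asks for a JSON object reply; support varies across compatible servers.
        "response_format": {"type": "json_object"},
    }
    return urllib.request.Request(
        chat_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def parse_completion(body):
    """Extract the model's JSON reply from a chat-completions response body."""
    content = body["choices"][0]["message"]["content"]
    result = json.loads(content)
    if "sql" not in result:  # "sql" is an assumed key for this sketch
        raise ValueError("model reply has no 'sql' key")
    return result
```

Sending the request would then be a matter of passing it to urllib.request.urlopen, decoding the response with json.load, and handing the result to parse_completion.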

Validate

SELECT-only check. Blocks unsafe SQL before it runs.

Execute

Runs against the warehouse with a row-cap. Returns rows + SQL + explanation.
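A row cap can be enforced on the client side with any DB-API 2.0 cursor, as in this sketch. It uses an in-memory SQLite database purely as a stand-in for the warehouse; the function name run_capped and the default cap of 100 are assumptions, not the project's values.

```python
import sqlite3


def run_capped(conn, sql, max_rows=100):
    """Execute sql and return (column_names, rows, truncated), keeping at most max_rows rows."""
    cur = conn.cursor()
    cur.execute(sql)
    columns = [d[0] for d in cur.description]
    rows = cur.fetchmany(max_rows + 1)  # one extra row reveals whether the result was cut off
    truncated = len(rows) > max_rows
    return columns, rows[:max_rows], truncated


# Stand-in for the warehouse connection.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (n INTEGER)")
conn.executemany("INSERT INTO t VALUES (?)", [(i,) for i in range(5)])
columns, rows, truncated = run_capped(conn, "SELECT n FROM t ORDER BY n", max_rows=3)
```

Fetching one row beyond the cap lets the UI tell the user that more rows exist without pulling the full result set into memory.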

Quick start

Clone the repo, set the LLM and Databricks environment variables, and run the Streamlit UI locally, or deploy as a Databricks App.

    git clone https://github.com/db-agent/db-agent
    cd db-agent
    pip install -r requirements.txt
    export LLM_BASE_URL="https://api.openai.com/v1"
    export LLM_MODEL="gpt-4o-mini"
    export DATABRICKS_HOST="..."
    export DATABRICKS_TOKEN="..."
    streamlit run databricks_app/app.py

Want the end-to-end Databricks Lakebase + Unity Catalog walkthrough? Open the companion lab.

Built by beCloudReady

Want this wired up against your data?

We help engineering teams deploy text-to-SQL agents against their own Databricks workspaces, with the safety, governance, and observability they need for production.